Unlocking Faster Fuzzy Finding: How a Smart Work Queue Made fzf Even Quicker

- Part 1: How fzf Just Became Way More Memory-Efficient: The Bitmap Cache Upgrade

- Part 2: Unlocking Faster Fuzzy Finding: How a Smart Work Queue Made fzf Even Quicker

- Part 3: Speeding Up fzf: How a Tiny Change Made the World's Fastest Fuzzy Finder Even Faster

- Part 4: Fixing a Sneaky XSS Vulnerability in Hugo: Inside the Commit That Makes Markdown Rendering Safer

- Part 5: Fixing Syncthing’s REST API: Why “application/json; charset=utf-8” Was Wrong and How a Simple Commit Made It Right

If you’ve ever launched fzf in your terminal and started typing to fuzzy-search through thousands of files, git commits, or command history entries, you know how magical it feels. fzf (fuzzy finder) is one of the most beloved command-line tools: lightning-fast, scriptable, and indispensable for power users.

But behind the scenes, fzf does a lot of heavy lifting to stay fast. A recent commit from maintainer Junegunn Choi makes its core matching engine spread work across CPU cores more evenly. Let’s break it down step by step in plain English, with technical details and visuals so you can see exactly what changed and why it matters.

What is fzf, and why does its “matcher” matter?

fzf reads a huge list of items (files, history entries, etc.), applies your fuzzy pattern, and shows ranked matches. To keep things snappy even with 100,000+ items, it parallelizes the work across all your CPU cores using Go goroutines.

The matcher is the heart of this process:

- Input is split into “chunks” (batches of items).

- Multiple worker goroutines scan those chunks for matches.

- Results are collected, sorted (if needed), and returned.
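To make the first step concrete, here is a minimal Go sketch of batching items into chunks. The function name splitIntoChunks and the chunk size of 4 are made up for illustration; fzf’s real chunking code and batch size are not shown in this post.

```go
package main

import "fmt"

// chunkSize is an illustrative batch size, not a value taken from fzf.
const chunkSize = 4

// splitIntoChunks groups items into consecutive batches of at most size items.
// The last batch holds whatever is left over.
func splitIntoChunks(items []string, size int) [][]string {
	if size <= 0 {
		return nil
	}
	chunks := make([][]string, 0, (len(items)+size-1)/size)
	for start := 0; start < len(items); start += size {
		end := start + size
		if end > len(items) {
			end = len(items)
		}
		chunks = append(chunks, items[start:end])
	}
	return chunks
}

func main() {
	items := []string{
		"a.go", "b.go", "c.go", "d.go", "e.go",
		"f.go", "g.go", "h.go", "i.go", "j.go",
	}
	for i, c := range splitIntoChunks(items, chunkSize) {
		fmt.Printf("chunk %d: %v\n", i, c)
	}
}
```

Ten items with a batch size of 4 give three chunks: two full ones and a final chunk of two. Each chunk is a unit of work that a worker can scan independently.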

The old matcher worked great… until it didn’t. That’s where this change (a direct commit, 92bfe68, rather than a pull request) comes in.

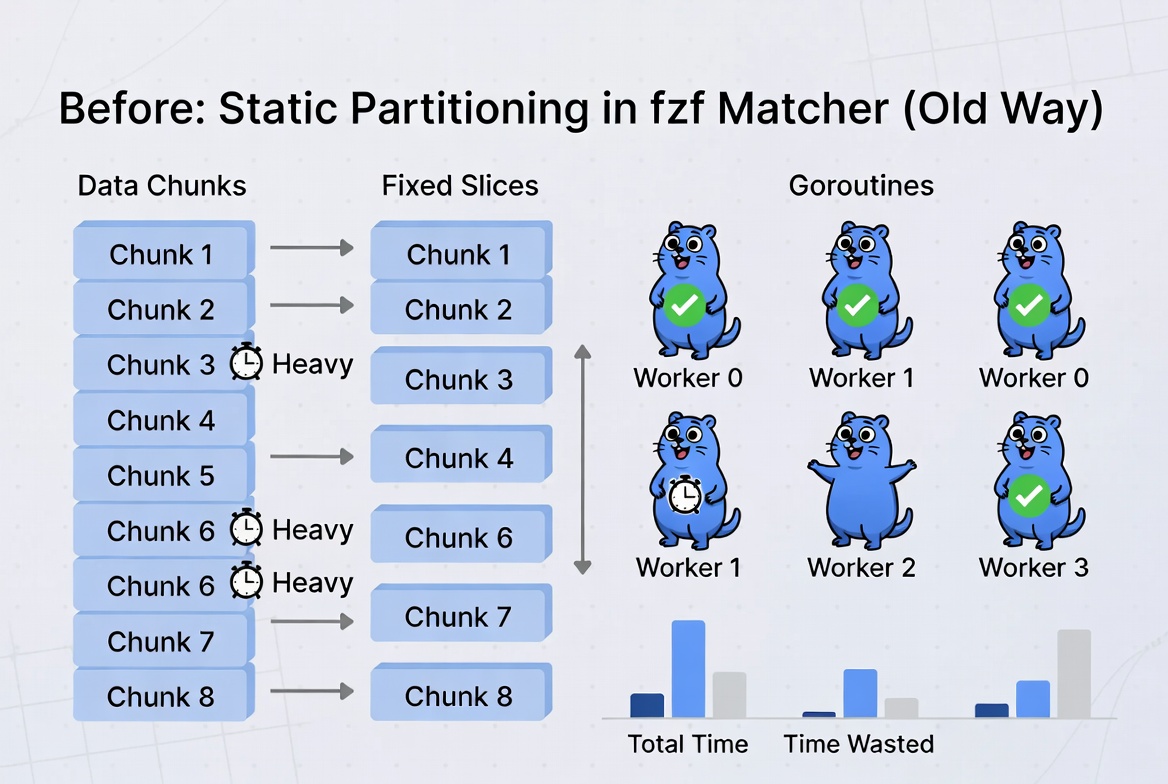

The old way: Static partitioning (the “pre-divide the pizza” approach)

Previously, fzf used static partitioning:

- It decided upfront how many “slices” to create (based on CPU cores × a multiplier, capped at 32).

- It chopped the list of chunks into fixed groups.

- Each worker goroutine got one entire group and processed it from start to finish.

Here’s what that looked like:

Problem? Load imbalance

Some chunks contain items that are slower to match (long strings, complex patterns, or just bad luck). One worker could be stuck for a long time while others sat idle. On machines with many cores, or with uneven data (like huge command histories), performance didn’t scale perfectly.
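The imbalance is easy to reproduce on paper. The sketch below is not fzf’s code: staticLoads is a hypothetical helper, and the per-chunk costs are invented numbers. It splits eight chunks into four contiguous groups, the way a static partitioner would, and adds up how much work each worker ends up with.

```go
package main

import "fmt"

// staticLoads splits per-chunk costs into `workers` contiguous groups
// (the last group absorbs any remainder) and returns each group's total cost.
func staticLoads(costs []int, workers int) []int {
	if workers <= 0 || len(costs) == 0 {
		return nil
	}
	if workers > len(costs) {
		workers = len(costs)
	}
	perGroup := len(costs) / workers
	loads := make([]int, workers)
	for w := 0; w < workers; w++ {
		start := w * perGroup
		end := start + perGroup
		if w == workers-1 {
			end = len(costs) // last worker takes the leftovers
		}
		for _, c := range costs[start:end] {
			loads[w] += c
		}
	}
	return loads
}

func main() {
	// Hypothetical matching cost of each chunk in milliseconds.
	// The first two chunks happen to be slow.
	costs := []int{9, 9, 1, 1, 1, 1, 1, 1}
	loads := staticLoads(costs, 4)

	total, busiest := 0, 0
	for _, l := range loads {
		total += l
		if l > busiest {
			busiest = l
		}
	}
	fmt.Println("per-worker load:", loads)             // [18 2 2 2]
	fmt.Println("finish time (busiest):", busiest)     // 18
	fmt.Println("ideal even split:", total/len(loads)) // 6
}
```

The total work is 24 ms, so an even split would be 6 ms per worker, yet the run takes 18 ms because one worker drew both slow chunks while the other three finished after 2 ms.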

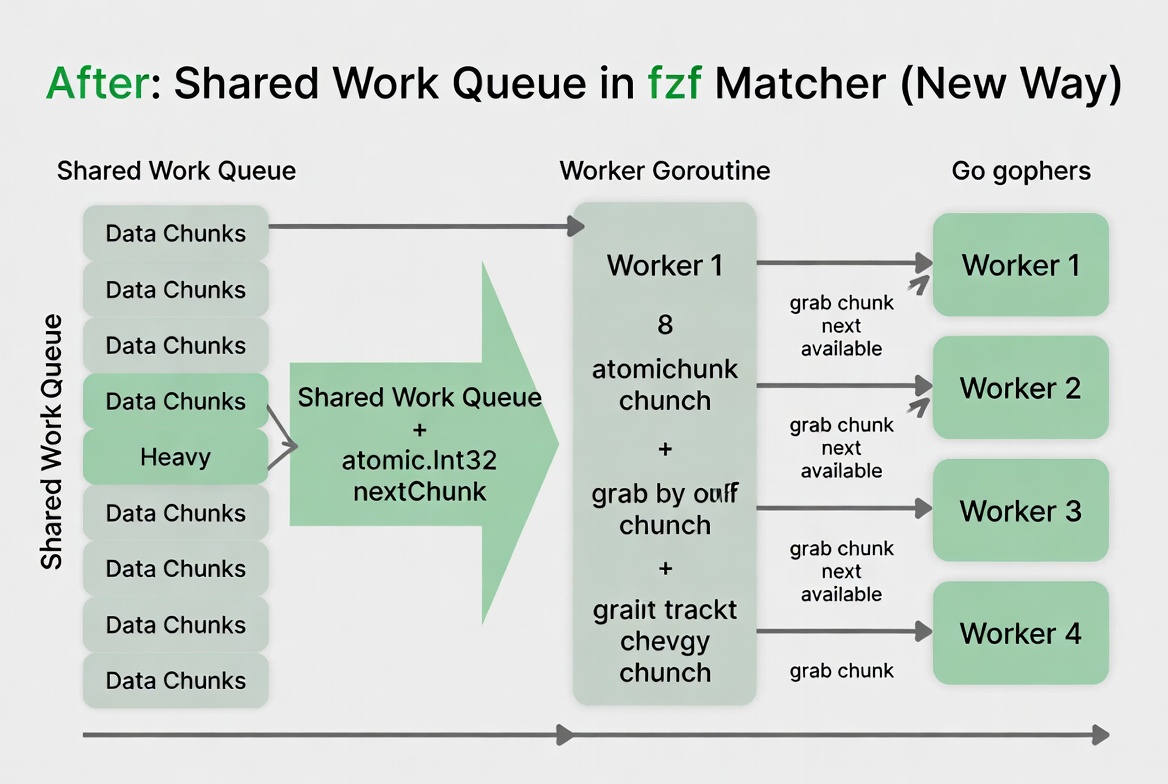

The new way: Shared work queue (the “self-service buffet” approach)

The commit replaces static slices with a shared work queue using a simple atomic counter.

Now:

- All chunks go into one big shared list.

- A small number of worker goroutines (equal to CPU cores or the --threads option) start up.

- Each worker repeatedly does: “What’s the next unprocessed chunk?” → grabs it → processes it → repeats until no chunks remain.

- An atomic.Int32 (thread-safe counter) hands out the next chunk index safely and quickly.

No more pre-dividing. Workers dynamically pull work as they finish.

Here’s the improved flow:
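In Go, that flow can be sketched roughly as follows. This is a self-contained illustration under assumptions, not the code from the commit: processChunks, sum, and the integer “chunks” are stand-ins invented here, and only the atomic.Int32 counter idea comes from the change itself.

```go
package main

import (
	"fmt"
	"sync"
	"sync/atomic"
)

// processChunks starts `workers` goroutines that pull chunk indexes from a
// shared atomic counter until none remain. It returns one result per chunk
// and the number of chunks each worker handled.
func processChunks(chunks [][]int, workers int, work func([]int) int) ([]int, []int) {
	if workers < 1 {
		workers = 1
	}
	results := make([]int, len(chunks))
	handled := make([]int, workers)

	var next atomic.Int32 // counts how many chunk indexes have been claimed
	var wg sync.WaitGroup
	for w := 0; w < workers; w++ {
		wg.Add(1)
		go func(w int) {
			defer wg.Done()
			for {
				ci := int(next.Add(1)) - 1 // claim a unique chunk index
				if ci >= len(chunks) {
					return // nothing left to do
				}
				// Each index is claimed by exactly one worker,
				// so these writes never overlap.
				results[ci] = work(chunks[ci])
				handled[w]++
			}
		}(w)
	}
	wg.Wait()
	return results, handled
}

// sum stands in for the real matching work done on a chunk.
func sum(xs []int) int {
	total := 0
	for _, x := range xs {
		total += x
	}
	return total
}

func main() {
	chunks := [][]int{{1, 2}, {3, 4}, {5}, {6, 7, 8}, {9}}
	results, handled := processChunks(chunks, 3, sum)
	fmt.Println("per-chunk sums:", results)            // [3 7 5 21 9]
	fmt.Println("chunks handled per worker:", handled) // varies from run to run
}
```

Which worker handles which chunk changes from run to run, but every chunk is processed exactly once because Add(1) returns a distinct value to each caller.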

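Result? Much better load balancing. A worker that finishes early simply grabs another chunk, so every core stays busy until the queue is empty, and the most any core can sit idle is roughly the time it takes to process one chunk.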

What changed in the code? (Easy-to-read technical breakdown)

The changes were focused and elegant — classic Junegunn style:

1. Simplified constants (src/constants.go)

    // Before
    numPartitionsMultiplier = 8
    maxPartitions = 32
    progressMinDuration = 200 * time.Millisecond

    // After (just the progress constant remains)
    progressMinDuration = 200 * time.Millisecond

2. NewMatcher now uses CPU count directly (src/matcher.go)

    // Before
    partitions := min(numPartitionsMultiplier * runtime.NumCPU(), maxPartitions)

    // After
    partitions := runtime.NumCPU()
    if threads > 0 {
        partitions = threads
    }
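To see what the two formulas produce, here is a small sketch that mirrors them as pure functions. The names oldPartitions and newPartitions are made up for this example, and the CPU count is passed in as a parameter instead of calling runtime.NumCPU() so the arithmetic is easy to follow.

```go
package main

import "fmt"

// Values from the "Before" constants shown earlier.
const (
	numPartitionsMultiplier = 8
	maxPartitions           = 32
)

// oldPartitions mirrors the "Before" expression: multiplier times cores, capped.
func oldPartitions(numCPU int) int {
	p := numPartitionsMultiplier * numCPU
	if p > maxPartitions {
		p = maxPartitions
	}
	return p
}

// newPartitions mirrors the "After" logic: one worker per core,
// unless a positive thread count overrides it.
func newPartitions(numCPU, threads int) int {
	if threads > 0 {
		return threads
	}
	return numCPU
}

func main() {
	for _, cpus := range []int{2, 4, 16} {
		fmt.Printf("cpus=%d old=%d new=%d\n",
			cpus, oldPartitions(cpus), newPartitions(cpus, 0))
	}
	// cpus=2 old=16 new=2
	// cpus=4 old=32 new=4
	// cpus=16 old=32 new=16
	fmt.Println("16 cpus with threads=6:", newPartitions(16, 6)) // 6
}
```

With a multiplier of 8 and a cap of 32, the old formula already hits the cap on any machine with four or more cores, so the partition count stopped reflecting the hardware. The new value tracks the core count directly.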

3. The big rewrite: scan() method

The old sliceChunks() function (which pre-divided everything) was deleted.

Instead, workers loop and grab the next chunk via nextChunk.Add(1):

    numWorkers := min(m.partitions, numChunks)
    var nextChunk atomic.Int32
    // ...
    for idx := range numWorkers {
        go func(idx int, slab *util.Slab) {
            for {
                ci := int(nextChunk.Add(1)) - 1
                if ci >= numChunks {
                    break // No more work
                }
                chunkMatches := request.pattern.Match(request.chunks[ci], slab)
                // ... collect matches
            }
            // ... sort if needed, send result
        }(idx, m.slab[idx])
    }

The result collection and sorting logic was also cleaned up to work with the new dynamic model.

Why was this change made? (The “why” behind the commit)

- Better real-world performance — especially on multi-core machines and uneven workloads (common in fzf use cases like command history or large directory listings).

- Linear scaling with CPU cores.

- Simpler, more maintainable code — fewer magic constants and no complex pre-slicing logic.

- Future-proof — easier to add more workers or tweak concurrency later.

This landed in fzf v0.71.0 (April 2026). The changelog even includes benchmarks showing the win:

Performance improvements

The search performance now scales linearly with the number of CPU cores, as we dropped static partitioning to allow better load balancing across threads.

Example numbers for a large query:

- 1 thread: ~180 ms (no change)

- 8 threads: 1.84x faster than before!

The goal is achieved: fzf is now even more delightful

By the end of this commit, fzf’s matcher is:

- Faster on modern hardware.

- Fairer with real data.

- Cleaner under the hood.

You don’t need to change anything in your .bashrc or scripts — it just works better. Next time you blaze through a massive history | fzf or fd | fzf --preview 'bat {}', thank this tiny but brilliant shared-work-queue optimization.

Try it today

Update fzf (git pull && make install or use your package manager) and enjoy the snappier experience. The fuzzy finder you love just got a little smarter behind the scenes.

Happy fuzzy finding! 🚀

I hope you enjoyed reading this post as much as I enjoyed writing it. If you know someone who could benefit from this information, send them a link to this post. If you want to get notified about new posts, follow me on YouTube, Twitter (X), LinkedIn, and GitHub.
